Cognitive Surrender: The Real AI Threat Nobody Is Talking About

Reports of the death of jobs may be premature

We are currently drowning in a sea of crazed AI pronouncements and a lot of very expensive crap.

In 2024, Anthropic CEO Dario Amodei famously predicted AI would kill all jobs by 2025. It is now 2026. Entry-level jobs are still here. Amodei’s prediction may yet come true, or it may be him telling investors he’ll be king of the world and hoping for a giant payday. In any event, the idea that robots will be replacing us or ordering us around brings us to a new term: Cognitive Surrender.

It describes our growing habit of deferring to an LLM, even when we know it is wrong, simply because it sounds authoritative and produces beautiful slides. It is the high-tech version of the well-dressed consultant presenting a nonsensical deck. Dress nonsense in a tuxedo and people will apparently believe almost anything.

The examples are already piling up. Deloitte billed the Australian government AU$440,000 for a report that turned out to be largely AI-generated, with fabricated court rulings and nonexistent academic citations, much like the filings of the attorneys who have been caught doing the same. Air Canada’s chatbot invented a bereavement discount policy that did not exist, and when sued for the discounts, the airline actually tried to argue the bot was a separate legal entity responsible for its own actions. Academic publishing has been flooded with AI-generated slop. The 2026 ICLR conference saw a 70% surge in submissions, to nearly 20,000 papers, far more than human researchers can actually peer review. Humans can’t sort through that pile of silicon-generated bullshit, so AIs are poised to review the work of AIs.

In each case the output looked authoritative. In each case someone surrendered their judgment to a machine, either because they were forced to or because they thought the machine must be right … and paid for it.

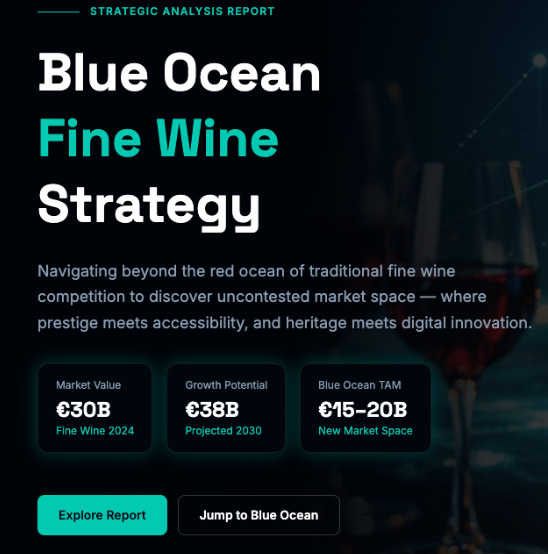

The €38 Billion Hallucination

Last week I tested Manus, the tool Meta bought for billions in late 2025, using the fine wine market as my test case. Having worked directly with the authors of Blue Ocean Strategy and taught the bestselling case on the subject for over a decade, I know this territory.

Manus spent ten full minutes thinking before proudly projecting €38 billion in growth by 2030.

Its brilliant insight? Treat wine like a collectible market.

I happen to have written a bestselling case about Marvel’s plunge into bankruptcy in the 1990s. I know exactly what happens when you try to pivot a consumer product into a collectible play: you go bankrupt. For the wine market, where the Wine Market Council 2026 data shows overall consumption is down 35% because Millennials simply do not like the taste of wine, projecting €38 billion in growth should taste off, like a bottle with a bad cork. Besides, wine as a collectible isn’t exactly new.

Honestly, anyone predicting that kind of growth in a shrinking demographic sounds like they have been sampling too much of the product themselves. The slides looked fantastic. The analysis sounded like something from a comic book.

The Fork in the Road

When I ran the exact same prompt through ChatGPT, it took four seconds to spit out exactly 800 words of generic filler (what a coincidence on the precise word count... not). It was just a wall of bland text designed to make you feel like the work was already done.

The irony is brutal: the doom and gloom predictions might actually come true, not because the machines become sentient, but because they make humans confidently incompetent. Or simply lazy.

There are two paths from here. One is the lazy path: type a question, receive polished and confident nonsense, and hand your thinking over to the machine. The other, our approach at VSTRAT, treats AI as infrastructure for human judgment: interactive, grounded in data, and forcing you to actually think. Plenty of people take the lazy path, even telling others that it is the correct way to do things.

The cost of choosing wrong is not just a bad strategy deck. Business schools, for example, that cut rigorous thinking tools to save a few hundred euros, replacing them with systems that produce confident beautiful nonsense, are training the next generation to surrender cognitively before they even start. That is the jobs story nobody is telling. Not AI replacing humans, but humans surrendering what makes us human to replace themselves.

We know that living in an opium fog may be nice (I’ve never tried it myself, but who knows…), and we also know it isn’t a good idea. Yet humanity is heading down the same path.

Look to the Light Table

Recent history already gave us the better model.

Not long ago, graphic designers worked with X-Acto knives, light tables, and physical templates. When desktop publishing arrived, the experts predicted mass unemployment. Instead, we saw an explosion in the industry. The tools lowered the floor so anyone could make a flyer, without lowering the ceiling for people who actually understood what good design looked like.

The same thing is happening now. AI can augment us. I use it as an editor: I write every piece by hand, then ask the LLM to act as a ruthless thought partner on structure and clarity. It is a fine editor. It is a terrible strategist. If I asked it to think from scratch, it would still tell me the wine market is a gold mine.

When I digress, as humans do, the machines also tell me to get back on task, even if it’s an evening or a weekend. They’re not very empathetic taskmasters, which makes sense given they’re robots with no emotions.

This worry isn’t new. When I first learned to program as a teenager decades ago, I created a poem generator that took words from a list and randomly put them together. I kept creating new poems until one made sense, and I even submitted it to a poetry journal. I did receive a response (a few choice, not very poetic words), and if that journal is still in existence, I’m apparently banned for life.

A final note: do not blame the AI. Blaming a machine for cognitive surrender is like blaming a car for driving off the road. The car did not let go of the wheel. You did.